AI Agents Are Moving Into Daily Work Even as Safety Reviews Warn of Gaps

Companies are pushing agentic AI into everyday software while a major international safety review says governments still need a clearer picture of the risks.

More than 30 countries and international organizations backed the International AI Safety Report 2026, a review led by Turing Award winner Yoshua Bengio and published on Feb. 3. The report describes itself as the largest global collaboration on AI safety to date.

Around the same time, companies across Asia and the United States kept accelerating plans to put AI agents into consumer and office software. The Korea Times reported in January that Naver and Kakao were preparing broader agentic AI rollouts in 2026, including services designed to act on conversational context rather than only respond to prompts.

The two developments are moving in parallel, not in sequence. Governments and researchers are still trying to map the risks of general-purpose systems, while technology firms are already treating agents as the next interface battle.

That gap is visible in regional coverage. In Seoul, the question is often which domestic platform can turn AI assistance into a durable product before foreign rivals do. In Washington and Brussels, the emphasis is more often on guardrails, export controls and state capacity. In workplaces testing the tools, the issue is narrower: whether the systems complete routine tasks accurately enough to be trusted with more of them.

The International AI Safety Report does not endorse a single regulatory model, but its structure signals where governments think the pressure is building. According to the report website, the expert panel includes representatives from countries and organizations such as China, India, Japan, New Zealand, Nigeria, South Korea, the United Kingdom, the United States and the European Union.

That list matters because the AI race is no longer framed only as a research competition. It is also framed as a question of compute access, infrastructure, legal exposure and labor reorganization. The news scan reviewed by Albis showed that Asian coverage was more likely to connect AI deployment to energy demand, office productivity and platform competition, while Western reporting still gave more weight to regulation and geopolitical control of advanced hardware.

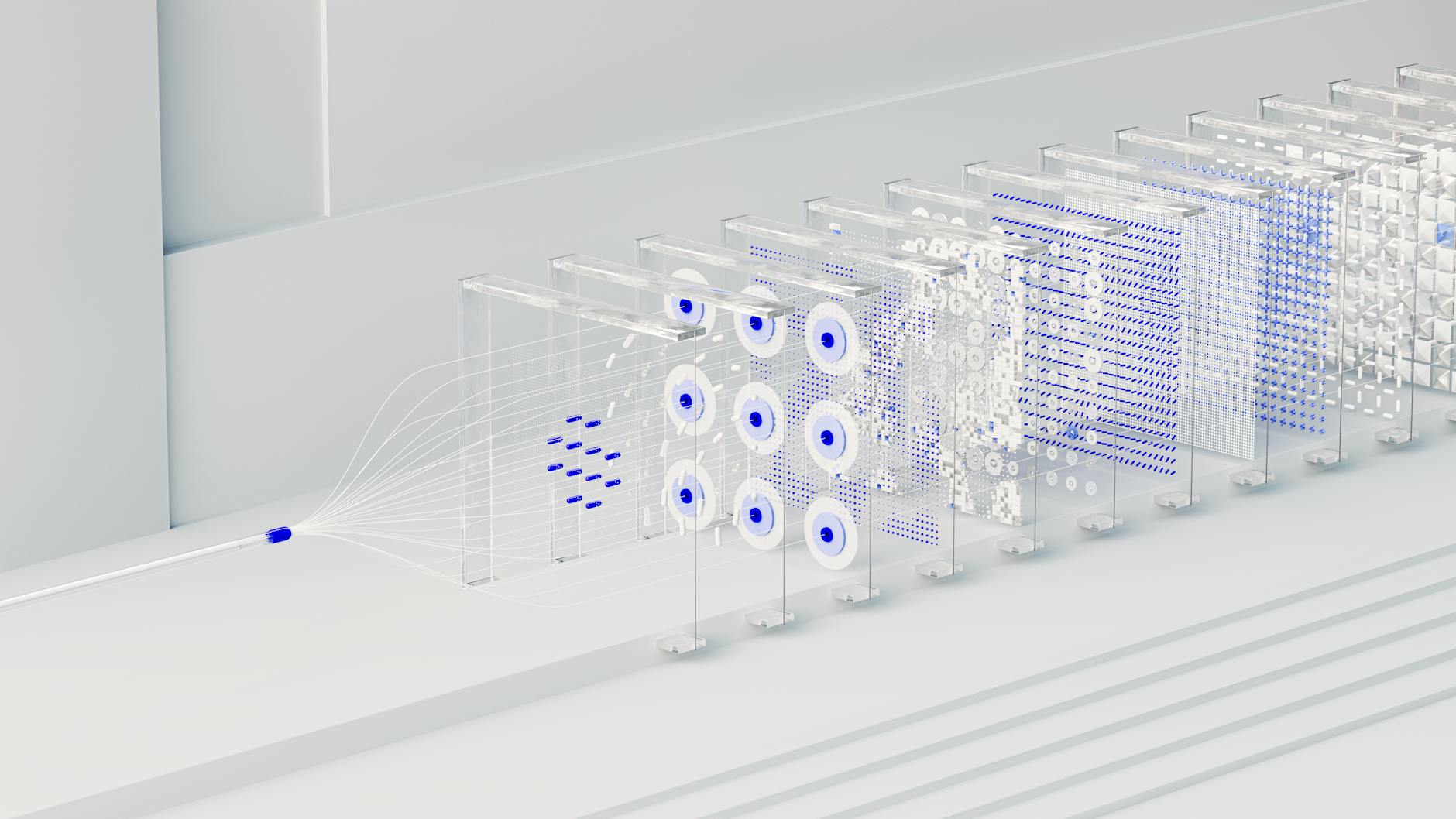

The commercial logic is straightforward. Agentic systems promise to move from helping workers write or summarize toward handling multistep tasks such as scheduling, searching, drafting and workflow execution. If those systems work consistently, they could change how software companies charge for productivity tools and how employers structure administrative work.

The technical problem is just as clear. An AI agent does not fail only when it produces a wrong sentence. It can fail when it chooses the wrong tool, misreads a constraint, completes the wrong task confidently or takes an action no human intended. Those are ordinary office errors made at machine speed.

That is why the safety discussion has widened beyond misinformation and frontier-model theory. Reliability, supervision and accountability are becoming operational issues. A chatbot that drafts a weak paragraph creates a nuisance. An agent that files the wrong request, triggers the wrong workflow or mishandles sensitive data creates consequences that end up in an audit trail.

The political framing remains uneven. Chinese and Korean industry coverage often treats the current phase as an infrastructure and execution contest. European and Anglo-American policy forums are more likely to describe it as a governance challenge that must be contained before deployment spreads further.

Neither side is waiting for the other. Firms are shipping. Governments are writing reviews and rules. Workers are being asked to use the products before many institutions have agreed on how much autonomy they should be allowed.

The next marker will be product launches in the second quarter and the policy responses that follow them. If the new generation of agents performs well enough in ordinary work, deployment will accelerate. If reliability failures pile up in public, the language of convenience could give way to the language of liability.

Sources for this article are being documented. Albis is building transparent source tracking for every story.